Main paper

The main SPHYNX paper, "SPHYNX: an accurate density-based SPH method for astrophysical applications", is published in A&A 606, A78 (2017) (PDF - 11 MB). If you use SPHYNX, please cite this paper.

Introduction

Welcome to SPHYNX!

SPHYNX is an SPH hydrocode focused on astrophysical applications.

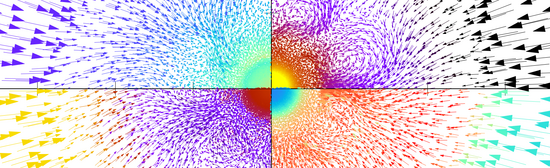

SPHYNX includes state-of-the-art methods that allow it to address subsonic hydrodynamical instabilities and strong shocks, which are ubiquitous in astrophysical scenarios. SPHYNX is of Newtonian type and grounded on the Euler-Lagrange formulation of the smoothed-particle hydrodynamics technique. Its distinctive features are:

- the use of an integral approach to estimating the gradients;

- the use of a flexible family of interpolators called sinc kernels, which suppress pairing instability;

- and the incorporation of a new type of volume element, which provides a better partition of the unity. Unlike other modern formulations, which consider volume elements linked to pressure, our volume element choice relies on density. SPHYNX is, therefore, a density-based SPH code.
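As a rough illustration of the sinc kernels mentioned in the list above, the sketch below evaluates a kernel of the form S_n(q) = [sin(πq/2) / (πq/2)]^n on 0 ≤ q < 2, with q = r/h. This functional form, the support of 2h, and the exponent n = 5 are assumptions taken from the general sinc-kernel literature, not from this page, and the function names are hypothetical. The 3D normalization constant is obtained numerically rather than quoted. This is not the SPHYNX implementation.

```python
import math


def sinc_profile(q, n):
    """Unnormalized sinc profile [sin(pi q/2) / (pi q/2)]^n, zero outside 0 <= q < 2.

    Assumed functional form; q = r / h is the distance in units of the smoothing length.
    """
    if q < 0.0 or q >= 2.0:
        return 0.0
    if q == 0.0:
        return 1.0  # limit of sin(x)/x as x -> 0
    x = 0.5 * math.pi * q
    return (math.sin(x) / x) ** n


def normalization_3d(n, steps=2000):
    """Constant B such that 4 pi B * integral_0^2 q^2 S_n(q) dq = 1 (composite Simpson rule)."""
    if steps % 2:
        raise ValueError("steps must be even for Simpson's rule")
    dq = 2.0 / steps
    total = 0.0
    for i in range(steps + 1):
        q = i * dq
        weight = 1 if i in (0, steps) else (4 if i % 2 else 2)
        total += weight * q * q * sinc_profile(q, n)
    integral = total * dq / 3.0
    return 1.0 / (4.0 * math.pi * integral)


def sinc_kernel_3d(r, h, n):
    """Normalized 3D kernel value W(r, h) for exponent n."""
    return normalization_3d(n) / h**3 * sinc_profile(r / h, n)


if __name__ == "__main__":
    n = 5  # hypothetical choice of exponent
    for r in (0.0, 0.5, 1.0, 1.5, 2.0):
        print(f"r/h = {r:.1f}  W = {sinc_kernel_3d(r, 1.0, n):.6f}")
```

Raising n makes the profile more sharply peaked at the centre, which is the sense in which the exponent gives a "flexible family" of interpolators.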

Download SPHYNX

To download SPHYNX, please fill out the form below.

This is a basic version of SPHYNX 3D. It includes a 3D gravity solver and the hydrodynamic modules. This version is prepared to simulate an Evrard Collapse.

SPHYNX is released under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

If you use SPHYNX, please cite the main paper in Astronomy & Astrophysics (A&A, 606, A78, 2017). We would also be very happy to see the exciting results you achieve with our code: pictures, videos, and references are welcome, so that we can publish them here.

Published papers that use/acknowledge SPHYNX

Sub-stellar engulfment by a main sequence star: where is the lithium?

Cabezón, R. M.; Abia, C.; Domínguez, I.; García-Senz, D.

Accepted at A&A

Axisymmetric magneto-hydrodynamics with SPH

García-Senz, D.; Wissing, R.; Cabezón, R. M.

Accepted for International SPHERIC Workshop (2022)

Conservative, density-based smoothed particle hydrodynamics with improved partition of the unity and better estimation of gradients

García-Senz, D.; Cabezón, R. M.; Escartín, J. A.

A&A 659, A175 (2022)

A Smoothed Particle Hydrodynamics Mini-App for Exascale

Cavelan, A.; Cabezón, R. M.; Grabarczyk, M.; Ciorba, F. M.

PASC20, ACM proceedings for PASC conference (2020)

Self-gravitating barotropic equilibrium configurations of rotating bodies with SPH

García-Senz, D.; Cabezón, R. M.; Blanco-Iglesias, J.M.; Lorén-Aguilar, P.

A&A 637, A61 (2020)

Two-level dynamic load balancing for high performance scientific applications

Mohammed, A.; Cavelan, A.; Ciorba, F.; Cabezón, R. M.; Banicescu, I.

SIAM proceedings of the Conference on Parallel Processing for Scientific Computing PP20 (2020)

Explosion of fast spinning sub-Chandrasekhar mass white dwarfs

Domínguez, I.; Cabezón, R. M.; García-Senz, D.

Nuclei in the Cosmos XV. Springer Proceedings in Physics, 219 (2019)

SPH-EXA: Enhancing the Scalability of SPH codes via an Exascale-ready SPH mini-app

Guerrera, D.; Cavelan, A.; Cabezón, R. M.; Imbert, D.; Piccinali, J.-G.; Mohammed, A.; Mayer, L.; Reed, D.; Ciorba, F.

arXiv:1905.03344

Detection of silent data corruptions in Smooth Particle Hydrodynamics simulations

Cavelan, A.; Cabezón, R. M.; Ciorba, F.

Proceedings for IEEE/ACM International Symposium on Cluster Computing and the Grid CCGrid'19 (2019)

Core-collapse Supernovae in the hall of mirrors. A three-dimensional code comparison project.

Cabezón, R. M.; Pan, K.-C.; Liebendörfer, M.; Kuroda, T.; Ebinger, K.; Heinimann, O.; Thielemann, F.-K.; Perego, A.

Astronomy & Astrophysics 619, A118 (2018)

Detection of silent data corruptions in Smooth Particle Hydrodynamics simulations

Cavelan, A.; Ciorba, F.; Cabezón, R. M.

Accepted for CCGrid'19

Surface and core detonations in rotating white dwarfs

García-Senz, D.; Cabezón, R. M.; Domínguez, I.

Astrophysical Journal, 862, 27 (2018)

DIAPHANE: a portable radiation transport library for astrophysical applications.

Reed, D.; Dykes, T.; Cabezón, R. M.; Gheller, C.; Mayer, L.

Computer Physics Communications, 226, 1 (2018)

An advanced leakage scheme for neutrino treatment in astrophysical simulations.

Perego, A.; Cabezón, R. M.; Käppeli, R.

Astrophysical Journal Supplement Series, 223, 22 (2016)

Type Ia Supernovae: Can Coriolis force break the symmetry of the gravitational confined detonation explosion mechanism?

García-Senz, D.; Cabezón, R. M.; Domínguez, I.; Thielemann, F.-K.

Astrophysical Journal, 819, 132 (2016)

Equalizing resolution in smoothed particle hydrodynamics calculations using self-adaptive sinc kernels.

García-Senz, D.; Cabezón, R. M.; Escartín, J. A.; Ebinger, K.

Astronomy & Astrophysics, 570, A14 (2014)

High resolution simulations of the head-on collision of white dwarfs.

García-Senz, D.; Cabezón, R. M.; Arcones, A.; Relaño, A.; Thielemann, F.-K.

MNRAS, 436, 3413 (2013)

Smoothed Particle Hydrodynamics: checking a tensor approach to calculating gradients.

Escartín, J. A.; García-Senz, D.; Cabezón, R. M.

Highlights of Spanish Astrophysics VII.

Proceedings of the X Scientific Meeting of the Spanish Astronomical Society (2013)

Testing the concept of integral approach to derivatives within the smoothed particle hydrodynamics technique in astrophysical scenarios.

Cabezón, R. M.; García-Senz, D.; Escartín, J. A.

Astronomy & Astrophysics, 545, A112 (2012)

Improving smoothed particle hydrodynamics with an integral approach to calculating gradients.

García-Senz, D.; Cabezón, R. M.; Escartín, J.

Astronomy & Astrophysics, 538, A9 (2012)

Authors

The main authors of SPHYNX are Rubén M. Cabezón and Domingo García-Senz.

For questions, bug reports, and/or suggestions, you can contact them by email at:

- ruben.cabezon <at> unibas.ch

- domingo.garcia <at> upc.edu

Installation instructions and pre-requisites

Installing SPHYNX is very easy:

- After downloading SPHYNX, uncompress it with

tar xjvf sphynx-<version>.tar.bz2

- This will create a directory named SPHYNX-<version>

- Done!

To run SPHYNX you need a Fortran compiler and an MPI library. The current version has been tested with the Intel cluster compiler and the GNU Fortran compiler.

Additional downloads

- Documentation + short tutorial (PDF)

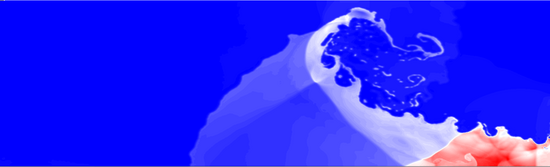

- ICs for 3D wind-cloud interaction test (zip - 110 MB)

SPHYNX takes part in the SPH-EXA project, funded by the Platform for Advanced Scientific Computing (PASC), which aims to develop a scalable and fault-tolerant SPH co-design code that can benefit from the next generation of Exascale supercomputers.

Latest news about SPHYNX

2019

03. Jul: Version 1.5.3 released. After one year of SPH-EXA, SPHYNX has benefited from a series of upgrades that have improved its scalability. In particular, distributing the neighbor arrays has enabled much better use of the RAM on each node. This new version uses Fortran binary I/O, which creates files that are on average 50% smaller than their ASCII equivalents and much faster to write and read. These binary files are also readable with Gnuplot v5.0 and later, so the data remain directly accessible without additional complex software.
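The size difference between ASCII and binary output mentioned in the 03 Jul 2019 entry can be illustrated with a small sketch. It encodes the same list of double-precision values once as formatted text and once as raw 8-byte floats, then compares the sizes. The text format (scientific notation with 8 decimal digits), the sample data, and the function names are assumptions for illustration. The actual SPHYNX file layout is not described here, and Fortran unformatted files may also carry record markers that this sketch omits.

```python
import random
from array import array


def encode_ascii(values, digits=8):
    """Encode values as one scientific-notation number per line (assumed text format)."""
    return "\n".join(f"{v:.{digits}e}" for v in values).encode("ascii")


def encode_binary(values):
    """Encode values as raw native double-precision floats, without record markers."""
    return array("d", values).tobytes()


def decode_binary(blob):
    """Read back the raw doubles written by encode_binary."""
    out = array("d")
    out.frombytes(blob)
    return list(out)


random.seed(0)
values = [random.uniform(-1.0, 1.0) for _ in range(10_000)]  # hypothetical sample data

ascii_blob = encode_ascii(values)
binary_blob = encode_binary(values)

print(f"ASCII : {len(ascii_blob)} bytes")
print(f"binary: {len(binary_blob)} bytes")
print(f"ratio : {len(binary_blob) / len(ascii_blob):.2f}")
```

The exact ratio depends on how many digits the text format keeps. The binary form also round-trips the values exactly, whereas a text format with limited digits does not.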

2018

06. Mar: Version 1.4 released. The code scales much better now. I added more OMP instructions to reduce the serial sections of the code. The main change, however, is a new way to find and store neighbors that relies on static memory allocation. This eliminates a huge idle time and boosts the scalability and overall speed of the code.

2017

28. Nov: Version 1.3 released. I finally decided to join hydro and gravity in one single MPI_WORLD. This makes the code more efficient in collapsing scenarios, where the gravity calculation becomes more and more important. In the previous implementation, if the workload split between hydro and gravity changed considerably during runtime, there were load-balancing problems and the code was generally slower than this version.

14. Aug: SPHYNX has a logo! It is basically a cool font that I found, named Space Age (by Justin Callaghan), literally on page one of the sci-fi fonts at 1001freefonts.com. I like it because it has some dots that resemble SPH particles, the letters are smooth (like SPH), and the X reminds me of a sketch of an accreting neutron star with a disk...

11. Aug: Version 1.2 released! No big changes, apart from an OMP loop that I changed from dynamic to static schedule. The dynamic schedule was probably lingering from an older version of the code, and correcting it improved the overall time in the Evrard test by 10%. I also added GNU support. Not bad for just coming back from holidays!